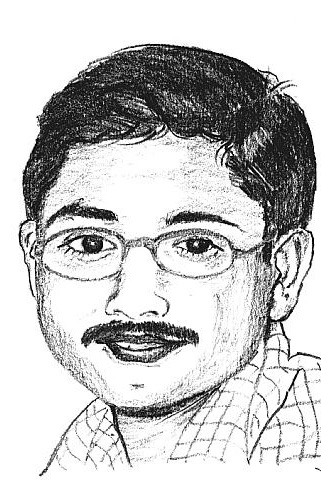

Amitabha Ghosh

Research & Engineering, Core Networking

2010-2012: Postdoc, Princeton

Specialities:

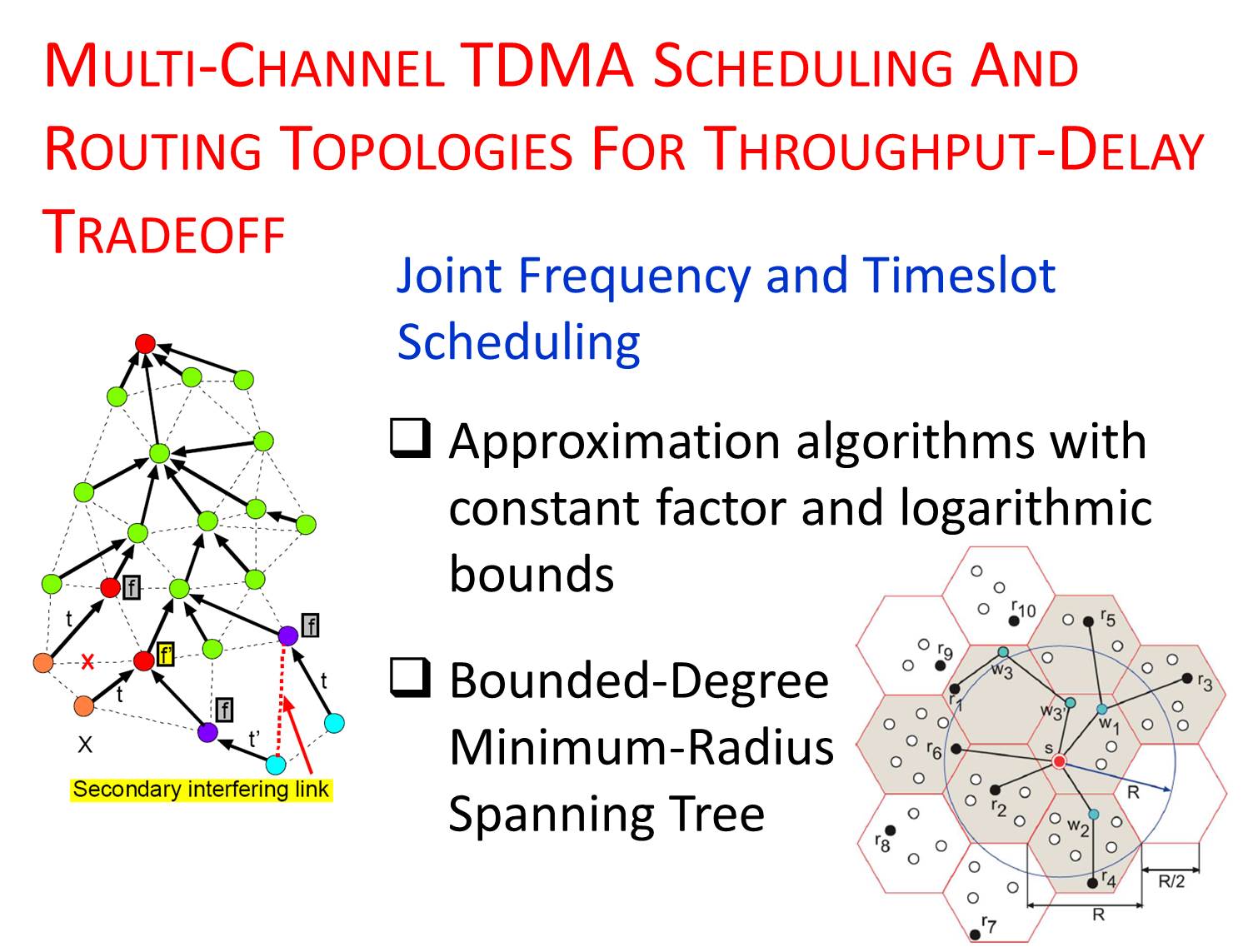

I got my Ph.D. in Electrical Engineering from the USC Viterbi School of Engineering in 2010, and was a postdoc at Princeton University from 2010 to 2012. At USC, I was part of the Autonomous Networks Research Group, and was advised by Prof. Bhaskar Krishnamachari. At Princeton, I worked in the EDGE Lab with Prof. Mung Chiang and Prof. Jennifer Rexford. In my dissertation, I worked on designing approximation algorithms for multi-channel scheduling, power control, and routing topologies to analyze throughput-delay tradeoffs for fast data collection in sensor networks (Thesis).

Prior to getting my Ph.D., I spent four years in industry working on GSM/GPRS technologies at Lucent - Bell Labs, and on ad hoc networks at Honeywell. I got an M.E. in Computer Science and Engineering from the Indian Institute of Science, Bangalore, and a B.Sc. (Hons.) in Physics from the Indian Institute of Technology, Kharagpur.

In the Spring of 2014, I had the opportunity to be a Guest Faculty at USC and teach a graduate course, EE 579: Wireless and Mobile Networks Design and Laboratory.

(Click here to read some of the published articles of my wife.)

As the Internet becomes increasingly popular for sharing multimedia content, we observe a widening gap between how content is produced/distributed and how it is transported over the network. On one hand, content providers (e.g., media companies, end-users who post video online) seek the best way to distribute content through technologies (e.g., multimedia signal processing, content caching, relaying, and sharing) by viewing the network simply as a means of transportation through bit-pipes. On the other hand, pipe-providers seek the best way to meet end-user requirements by managing resources on each link, between links, and end-to-end. In other words, content-providers assume that the communication pipes are dumb, whereas pipe-providers assume that all bits are equal, thus creating a content-pipe divide. The players involved in this are Internet Service Providers (ISPs), peer-to-peer (P2P) system providers, content distribution network (CDN) providers, equipment vendors, and, of course, consumers and producers of content.

Multimedia processing, and, in particular, video processing presents a set of degrees of freedom that are traditionally designed in isolation from how the resulting packets are transported over a network. Content-aware networking refers to the methodology of utilizing the rate-distortion (RD) characteristics of the content to design more adaptive and efficient network protocols. It advocates a cross-layer design philosophy where network resources are allocated and provisioned with optimality criteria that are reflective of the content itself.

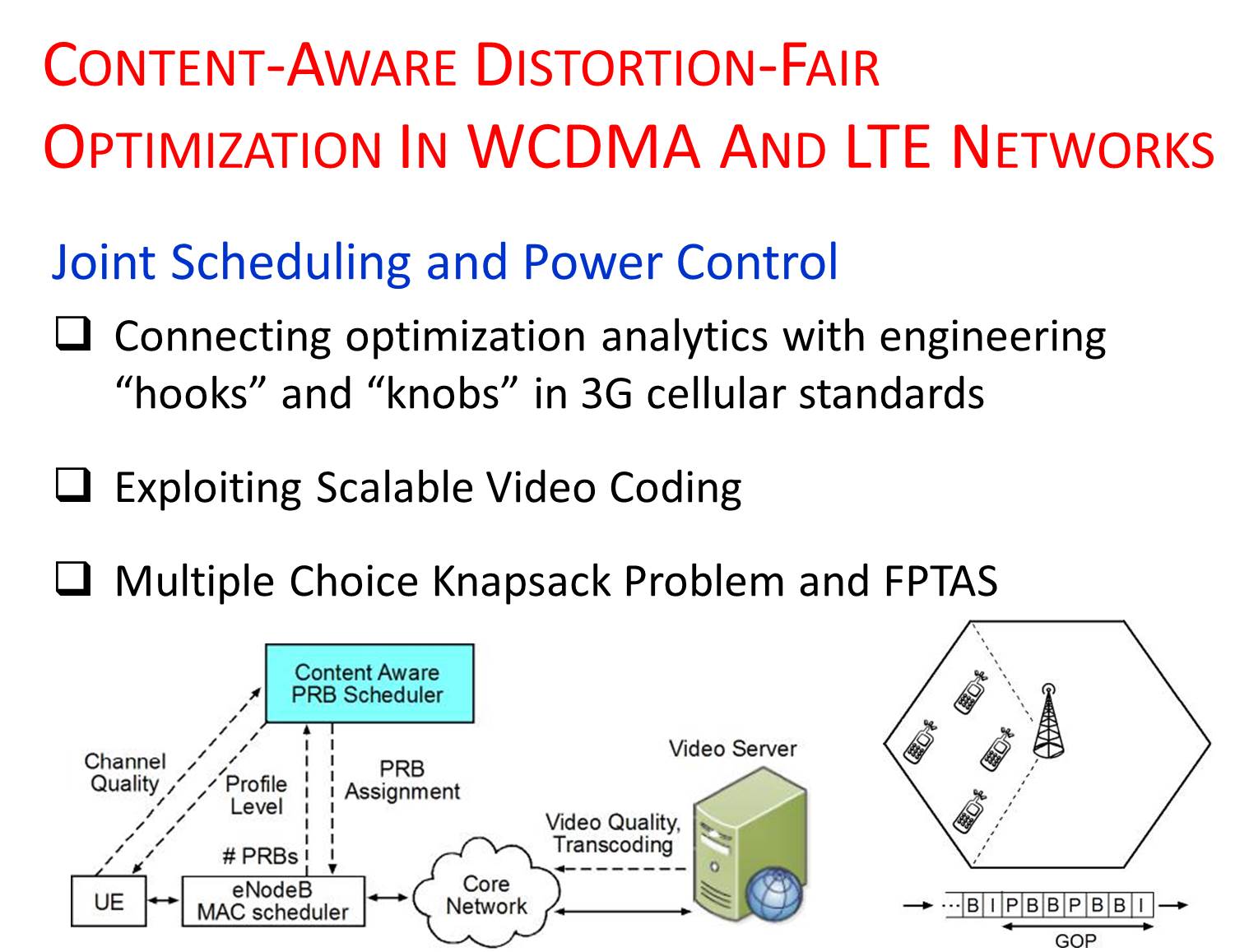

In this work, we consider cellular networks (WCDMA and LTE) and develop techniques to exploit content characteristics (e.g., video encoding, frame type, GOP structure, etc.) for allocating resources based on the importance of video packets. To narrow the gap between content-aware networking and its practical adoption in 3G cellular systems, our work focuses on connecting the analytics of content-aware optimization with the specifics of engineering hooks and knobs in 3G cellular standards, as well as on implementing them in an industry-grade Qualcomm system emulator that offers a configurable and realistic test-bed to validate the theory. We also propose a signaling mechanism to incorporate the content-aware techniques into existing LTE systems without much overhead. Our techniques are validated by real-world measurement data collected in both indoor and outdoor environments from AT&T and T-Mobile networks. We have also implemented some of this work in testbed experiments using reconfigurable software-defined radios (SDRs) such as WARP boards.

In the recent past, we observed two conflicting trends emerging in ISP data plans and the use of multimedia services over cellular networks. About 30% of Internet traffic belongs to just one video distributor: Netflix. Together with YouTube, Hulu, HBO Go, iPad personalized video magazines, and news webpages with embedded videos, video traffic is surging on both wireline and wireless Internet. We have also observed several ISPs, such as AT&T Mobility and Verizon Wireless, capping their unlimited data plans and charging per GB once a baseline quota is exceeded during a monthly billing cycle. Tiered pricing, or usage-based pricing, is becoming increasingly commonplace in many markets and countries. For example, beginning in July 2011, AT&T's high-speed Internet service began charging $2/GB beyond a 250 GB baseline allowance. In Canada, the numbers are even steeper: $2/GB beyond a 25 GB quota.

These two emerging trends in Internet applications, video traffic becoming dominant and usage-based pricing becoming prevalent, are at odds with each other. On one hand, videos, especially on flat screens and high-resolution tablets, consume much more data than other traffic types. On the other hand, usage-based pricing threatens the entire business model of delivering entertainment via IP and LTE. We ask: "Is there a way for users to stay within their target data quota without suffering too much degradation in perceptual video quality?"

In this work, we develop QAVA, a Quota Aware Video Adaptation solution, that can significantly mitigate this conflict by leveraging the compressibility of videos and profiling the usage behavior of consumers over a billing cycle. If a consumer wants to avoid missing some videos, QAVA tries to save money for the consumer with a minimum impact on the quality. Or, equivalently, QAVA enables a consumer to watch more videos under a given target monthly budget while maintaining a target video quality.
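To make the quota-versus-quality tradeoff concrete, here is a minimal sketch, not the QAVA algorithm itself. It assumes every video expected in the billing cycle is available in a few encoded versions, each with a hypothetical `(size_mb, quality)` pair, and greedily spends the remaining quota on the upgrade that buys the most quality per extra megabyte. The function name, the data layout, and the quality scores are all illustrative assumptions.

```python
def pick_versions(videos, quota_mb):
    """Choose one encoded version per video so the total size fits the quota.

    videos:   list of videos; each video is a list of (size_mb, quality)
              tuples sorted by increasing size (hypothetical layout).
    quota_mb: data budget for the billing cycle, in MB.
    Returns a list with the chosen version index for each video.
    """
    # Start every video at its smallest (lowest quality) version.
    choice = [0] * len(videos)
    used = sum(versions[0][0] for versions in videos)
    if used > quota_mb:
        raise ValueError("quota is too small even at the lowest quality")

    while True:
        best, best_ratio = None, 0.0
        for i, versions in enumerate(videos):
            j = choice[i]
            if j + 1 >= len(versions):
                continue  # already at the highest version
            extra = versions[j + 1][0] - versions[j][0]
            gain = versions[j + 1][1] - versions[j][1]
            if extra <= 0 or used + extra > quota_mb:
                continue  # upgrade does not fit in the remaining quota
            ratio = gain / extra  # quality gained per extra MB
            if ratio > best_ratio:
                best, best_ratio = i, ratio
        if best is None:
            return choice  # no affordable upgrade improves quality
        j = choice[best]
        used += videos[best][j + 1][0] - videos[best][j][0]
        choice[best] += 1
```

For example, `pick_versions([[(100, 1.0), (200, 2.0), (400, 2.5)], [(100, 1.0), (200, 1.2)]], 400)` upgrades the first video once and then the second video once, because the largest version of the first video no longer fits. A greedy rule like this is only a heuristic for the underlying multiple-choice knapsack problem.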

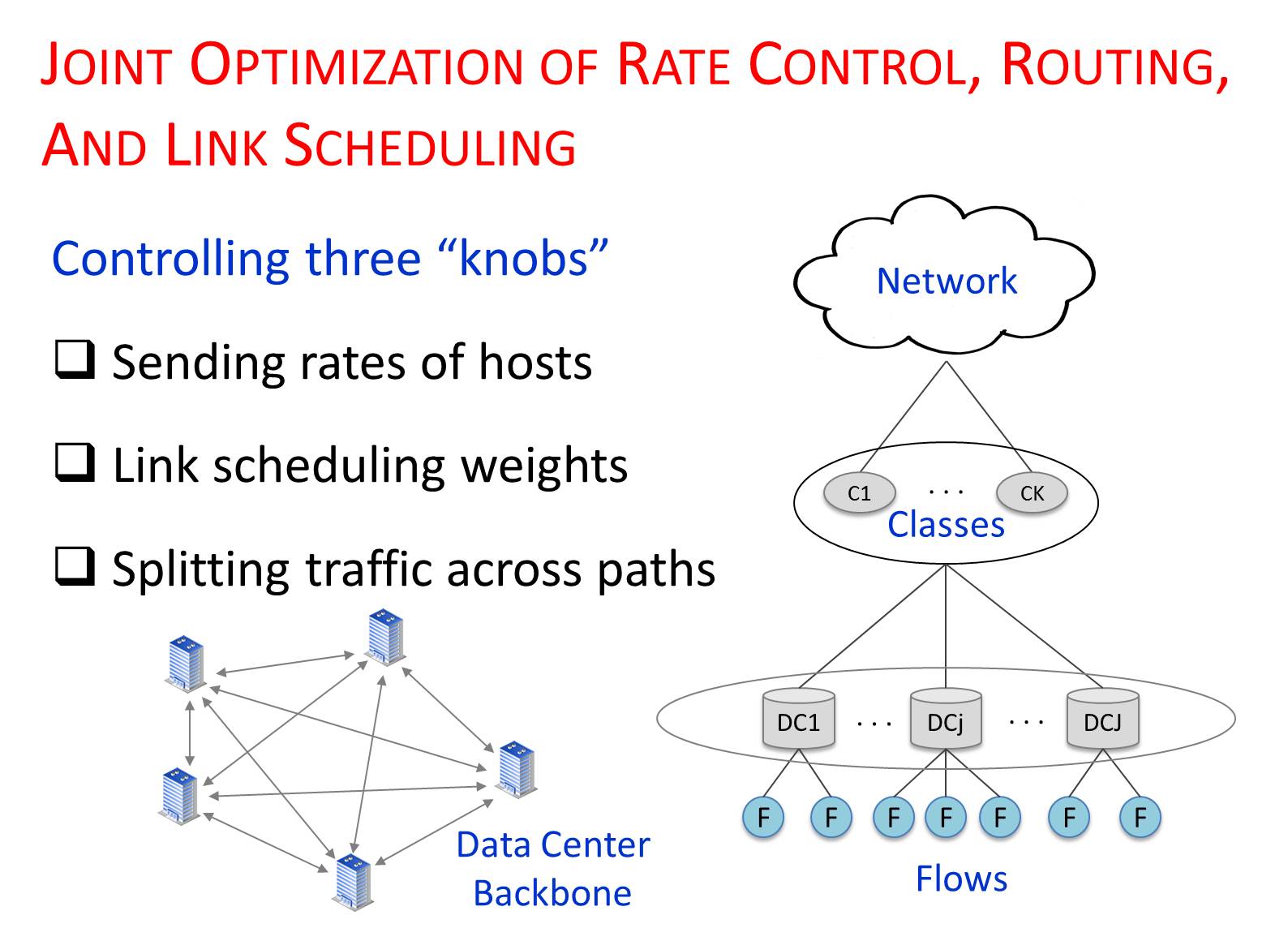

Large online service providers (OSPs), such as Google, Yahoo!, and Microsoft, often build private backbone networks to interconnect data centers in multiple geographical locations. These data centers house numerous applications that produce multiple classes of traffic with diverse performance objectives. Applications in the same class may also have differences in relative importance to the OSP's core business. As the backbones themselves represent substantial investment, it is highly desirable that they be used efficiently and effectively, while simultaneously respecting the characteristics of the traffic they carry.

Despite many challenges, these OSP traffic characteristics provide new opportunities for wide area traffic management. In traditional traffic engineering, an ISP controls backbone routing and link scheduling, but does not control how end hosts should perform congestion control. In contrast, an OSP controls both the hosts and the routers, offering the flexibility to jointly optimize rate control, backbone routing, and link scheduling. Not knowing the end-to-end performance objectives of applications, ISPs typically focus on optimizing indirect measures of performance, such as minimizing congestion. In contrast, an OSP can maximize the aggregate performance across all applications, with a different utility function for each traffic class.

Centralized traffic engineering is increasingly viewed as a viable approach to wide area networks (e.g., Google's B4 software defined wide area network). Motivated by this, we present two semi-centralized architectures that are scalable and practical for jointly optimizing rate control, routing, and link scheduling in data center backbone networks. We achieve scalability by distributing computation across multiple tiers of the design using a few management controllers. Using optimization decomposition, we show that both designs are provably optimal, and show a tradeoff between rate of convergence and message passing.
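For readers unfamiliar with optimization decomposition, the following is a minimal textbook-style sketch of dual decomposition for rate control on fixed single-path routes. It is not one of the two architectures described above: routing and link scheduling are left out, and the weighted-log utility, the step size, and all names are assumptions chosen for illustration. Each link keeps a price that rises when the link is overloaded, and each flow sets its rate from the total price along its path.

```python
def dual_decomposition(routes, capacity, weights, step=0.01, iters=5000):
    """Maximize sum_s weights[s] * log(x_s) subject to link capacities.

    routes:   routes[s] is the list of link indices used by flow s.
    capacity: capacity[l] is the capacity of link l.
    weights:  weights[s] is the utility weight of flow s.
    Returns (rates, prices) after a fixed number of iterations.
    """
    prices = [1.0] * len(capacity)
    rates = [0.0] * len(routes)
    for _ in range(iters):
        # Source subproblem: for U(x) = w * log(x), the best response to a
        # path price q is x = w / q.
        for s, links in enumerate(routes):
            path_price = sum(prices[l] for l in links)
            rates[s] = weights[s] / max(path_price, 1e-9)
        # Link subproblem: projected gradient step on the dual variable.
        for l in range(len(capacity)):
            load = sum(rates[s] for s, links in enumerate(routes) if l in links)
            prices[l] = max(0.0, prices[l] + step * (load - capacity[l]))
    return rates, prices
```

With one long flow crossing two unit-capacity links and one short flow on each link, `dual_decomposition([[0, 1], [0], [1]], [1.0, 1.0], [1.0, 1.0, 1.0])` approaches the proportionally fair rates of roughly 1/3 for the long flow and 2/3 for each short flow. Only prices and rates are exchanged between links and sources, which is the kind of message passing that a decomposed design trades against convergence speed.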

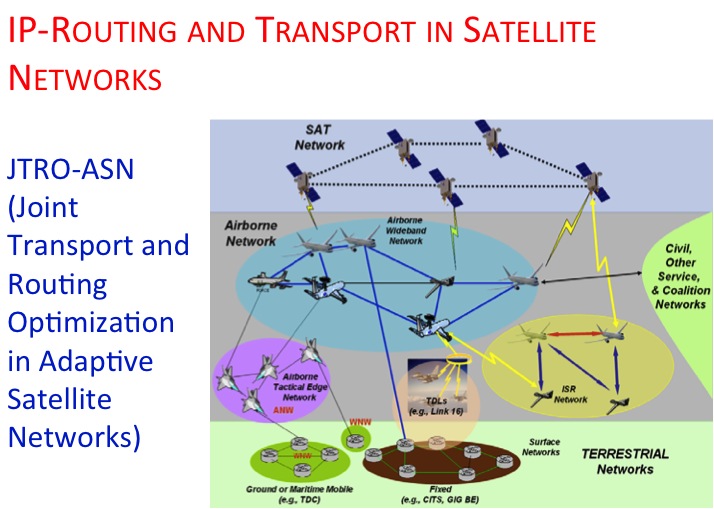

Efficient routing strategies combined with robust transport protocols are paramount in new generation satellite networks, which encompass diverse constellations located at different orbits. Compared to older bent-pipe satellite systems with no onboard IP-routing and processing schemes, the newer generations offer a lot more flexibility. Accordingly, the impact of cross-layer approaches and joint optimization of routing and transport protocols needs to be investigated for heterogeneous Geostationary (GEO) + Low Earth Orbit (LEO) satellite networks.

In this work, we propose a joint transport and IP-routing optimization framework for adaptive satellite networks (JTRO-ASN). The framework establishes end-to-end routes in space, exploiting inter-satellite and inter-level SATCOM links in different orbits and constellations. It reduces ground station dependencies, decreases end-to-end latencies, and supports traffic prioritization and the distribution of traffic load over multiple available routes. To establish routes in space, it uses predefined satellite orbits to predict the topology and to calculate routes. Furthermore, JTRO-ASN shares relevant information across layer boundaries to achieve resource utilization and protocol optimization that cater to the different QoS requirements of different traffic types.

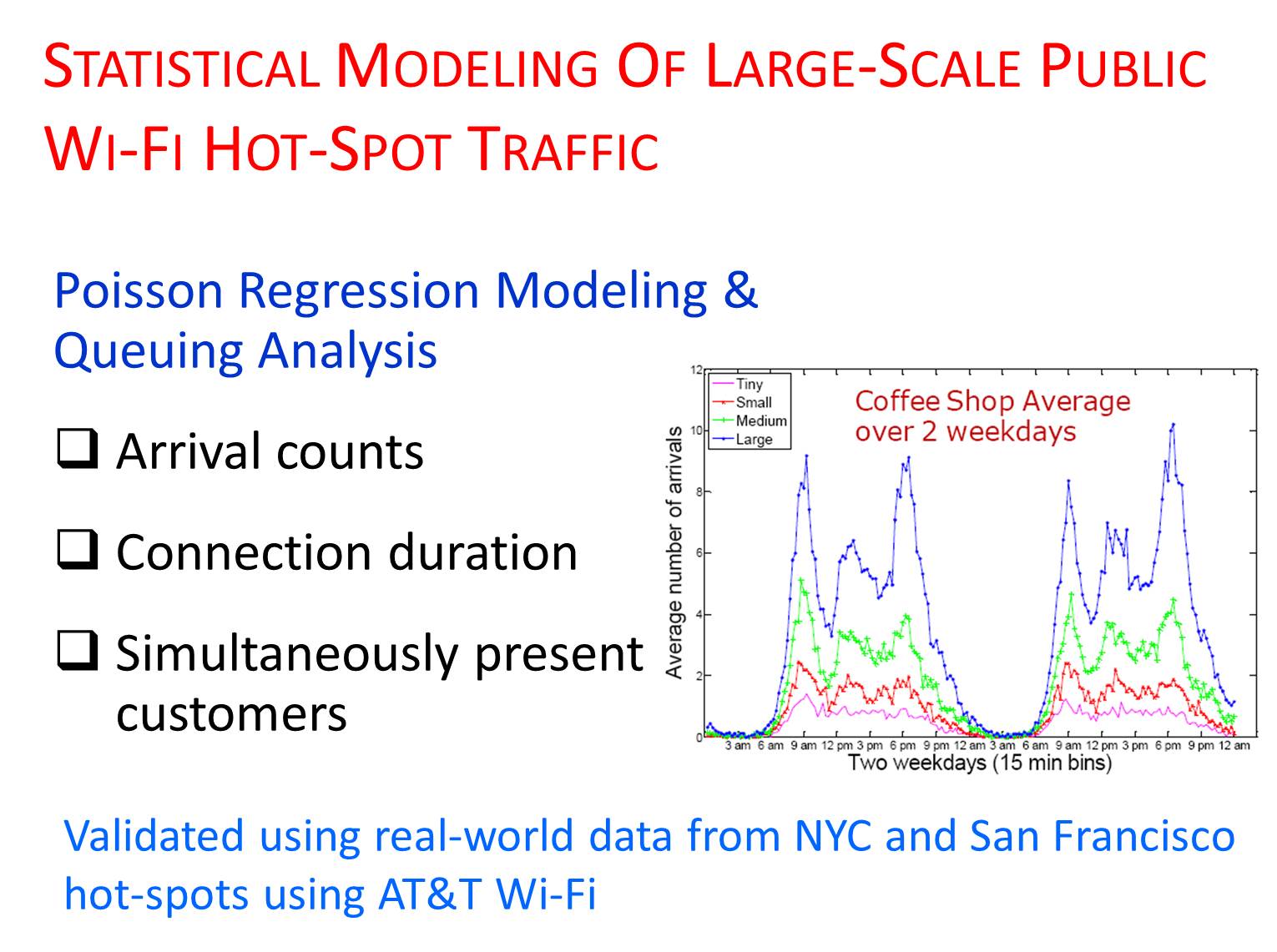

The ubiquitous deployment of wireless hot-spots (e.g., coffee shops, book stores, malls, hotels, etc.) has drawn millions of users, providing smaller coverage footprints at considerably higher bandwidth. This has motivated the need to understand traffic characteristics in large-scale Wi-Fi data networks. Previous studies in this area of "workload characterization" are based on either a limited set of users under laboratory conditions, or a short period of time with a small number of users, typically by active monitoring at venues like technical conferences. However, understanding these Wi-Fi networks that are deployed publicly and operated without any regulation on user behavior is critical to gaining insights into their workload patterns and network capacity planning.

In this work, we develop a common modeling framework to characterize customer arrival patterns, connection durations, and the number of simultaneously present users in public hot-spots. We combine statistical clustering with Poisson regression to fit a non-stationary Poisson process to the arrival counts and demonstrate its remarkable accuracy. We also model the heavy-tailed distribution of connection durations by fitting a Phase Type distribution to its logarithm, and finally, use queuing models to find the distribution of the number of simultaneous customers. This work uses real-world measurement data collected from large-scale public hot-spots using AT&T networks in New York City and San Francisco during the months of March to May in 2010.
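As a rough illustration of how arrival and duration models combine into a count of simultaneous users, the sketch below assumes an infinite-server queue with non-stationary Poisson arrivals. Under that assumption (and starting from an empty system), the number of users present at time t is Poisson with mean m(t) = ∫ λ(u) P(S > t − u) du over 0 ≤ u ≤ t, a standard result for such queues. The specific queuing model, the exponential duration used in the example, and the function names are assumptions here, not the fitted models from the measurement study.

```python
import math


def mean_users(t, rate, survival, dt=0.001):
    """Mean number of users present at time t in an infinite-server queue.

    rate:     function u -> arrival rate at time u (users per hour).
    survival: function x -> probability that a connection lasts longer than x.
    Integrates rate(u) * survival(t - u) over [0, t] with the midpoint rule.
    """
    n = int(round(t / dt))
    total = 0.0
    for k in range(n):
        u = (k + 0.5) * dt
        total += rate(u) * survival(t - u)
    return total * dt


def poisson_pmf(k, mean):
    """Probability of exactly k simultaneous users when the count is Poisson."""
    return math.exp(-mean) * mean ** k / math.factorial(k)
```

As a sanity check, a constant rate of 10 arrivals per hour with exponentially distributed durations of mean 0.5 hours, i.e. `mean_users(20.0, lambda u: 10.0, lambda x: math.exp(-x / 0.5))`, gives a mean close to 5 users, the familiar arrival rate times mean duration. A time-varying `rate` and a heavy-tailed `survival` function can be substituted without changing the code.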

The practical utilities of our models and methods are several. They help in capacity planning for sites where decisions are based on vendor or network provider estimates of equipment capacity, stated in terms of simultaneously present users instead of detailed low-level data on a continuous basis. The models are also useful in the context of network monitoring for detection of changes in traffic patterns, intrusion, etc. The ability demonstrated in this work to model diverse public hot-spots with a common class, as well as large sub-clusters of venues within a single business type with a common set of parameters is particularly useful in practical scenarios.

Fast and periodic collection of aggregated data is of considerable interest for mission-critical and continuous monitoring applications in sensor networks. Although sensor networks have been traditionally used for low data rate monitoring applications, recently they are being considered for more complex and mission critical applications (e.g., target tracking, structural health monitoring, health care), requiring efficient and timely collection of large amounts of data. Considering the low bandwidth of sensor radios, interference and contention in the wireless medium, and energy efficiency requirements due to limited power supply, fulfilling such time-constrained high-rate data collection becomes challenging.

In this work, we designed efficient algorithms for (1) scheduling using multiple orthogonal frequency channels, (2) transmission power control, and (3) constructing optimal routing topologies to enable fast data collection in sensor networks. We refer to the data collection process as "convergecast," and demonstrate the trade-offs between throughput and delay inherent to this process and in the algorithms enabling such data collection. We also prove several worst-case performance bounds for the algorithms using graph theoretic tools.
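To give a feel for convergecast scheduling, here is a simplified sketch rather than any of the algorithms from this work. It assumes aggregated data collection on a given routing tree and assumes that enough orthogonal channels are available to remove interference between different parent-child pairs, so the only remaining constraints are that a parent receives from one child per time slot and that a node transmits only after hearing from all of its children. The function name and the input layout are hypothetical.

```python
def schedule_aggregated_convergecast(parent):
    """Greedy slot assignment for aggregated convergecast on a tree.

    parent: dict mapping each node to its parent; the sink maps to None.
            The mapping is assumed to describe a tree with sortable node ids.
    Returns a list of slots; each slot is a list of (child, parent) links.
    """
    # pending[v] holds the children of v that have not yet transmitted.
    pending = {v: set() for v in parent}
    for v, p in parent.items():
        if p is not None:
            pending[p].add(v)
    unsent = {v for v, p in parent.items() if p is not None}

    slots = []
    while unsent:
        busy_receivers = set()
        slot = []
        for v in sorted(unsent):
            p = parent[v]
            if pending[v]:
                continue  # v must first aggregate data from all its children
            if p in busy_receivers:
                continue  # the parent can receive only one packet per slot
            slot.append((v, p))
            busy_receivers.add(p)
        # Apply the slot after it is fully built, so no node both sends
        # and receives within the same slot.
        for v, p in slot:
            unsent.discard(v)
            pending[p].discard(v)
        slots.append(slot)
    return slots
```

For the tree `{0: None, 1: 0, 2: 0, 3: 1, 4: 1}` this produces three slots: nodes 2 and 3 transmit first, then node 4, and finally node 1 forwards the aggregate to the sink. Changing the shape of the tree changes the number of slots, which is one way to see why the routing topology matters for collection delay.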

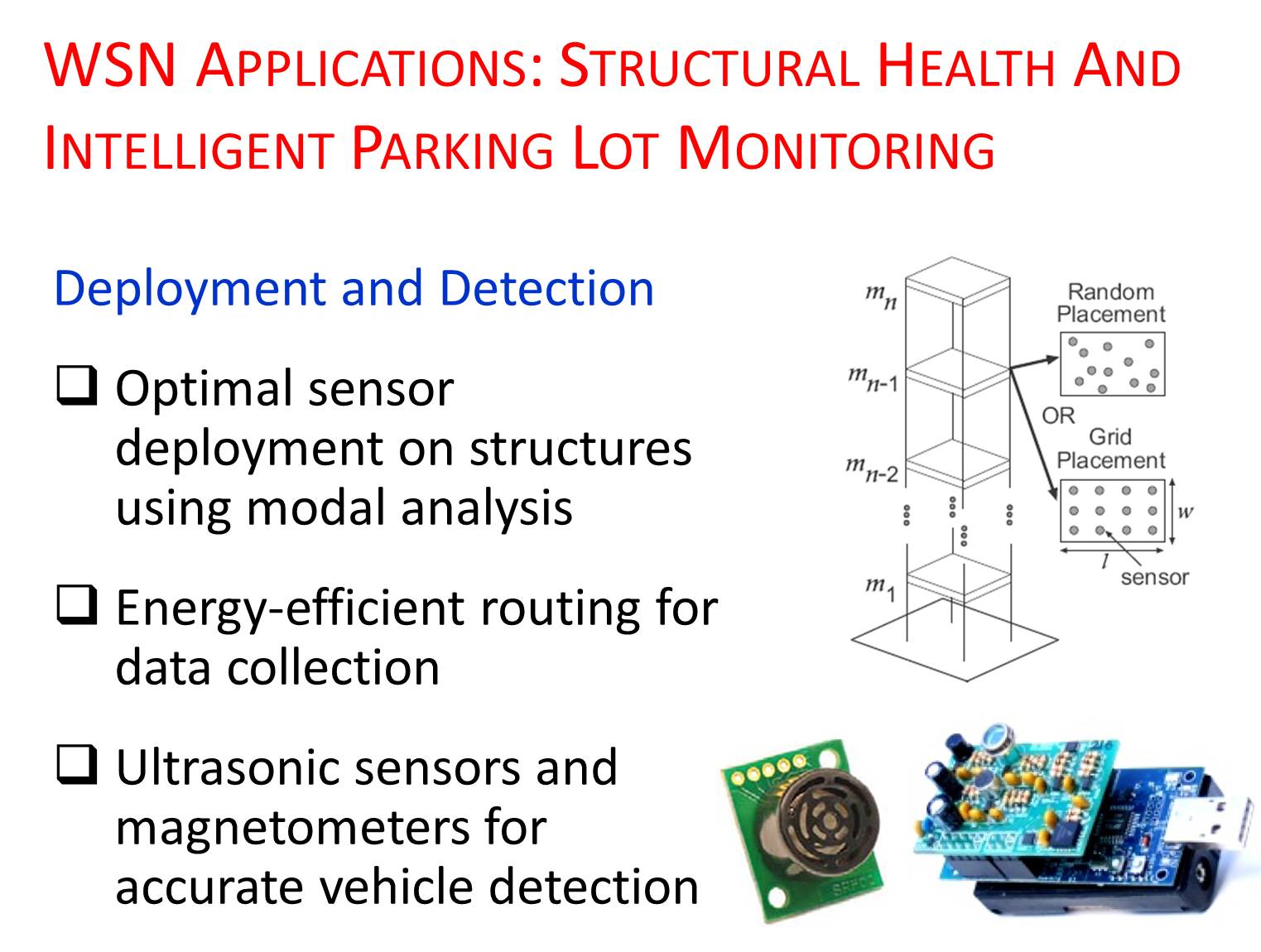

Reliable wireless structural health monitoring (SHM) systems capable of predicting failures in structures through non-destructive diagnosis procedures can have substantial societal and economic benefits. Several catastrophes in the recent past (e.g., I-35 Mississippi river bridge collapse in 2007; building collapse in Dhaka, Bangladesh in 2013; I-5 Skagit River bridge collapse in Washington in 2013, etc.), as well as numerous "fracture critical" bridges in the U.S., underscore the importance of reliable monitoring systems in analyzing structural damage and deterioration processes. In this work, we design an optimal sensor placement strategy on building structures using mode shape analysis, and an energy-efficient routing scheme to enable fast data collection. We show that the optimal locations exhibit a tradeoff with the cost of data collection. On one hand, sensors should be placed with enough separation between them to increase the accuracy of structural modal parameter estimation. On the other hand, large separations increase the cost of data collection. We suggest a compromise by defining an objective function with weighting factors on energy consumption and estimation accuracy.

With the growth of the automotive industry, the demand for intelligent parking services is expected to grow rapidly in the near future. This emerging service will provide automatic management of parking lots through accurate monitoring, and will make that information available to customers and facility administrators. In this work, we show that a combination of magnetic and ultrasound sensors can accurately and reliably detect vehicles in a parking lot. We conduct extensive real-world experiments in a multi-storied university parking space, and demonstrate the efficacy of the proposed approach with an elaborate car counting experiment lasting over a day.

In this work, we develop methods using computational geometry tools such as Voronoi diagrams, orthographic projections, and spherical Delaunay triangulation to provide effective coverage, connectivity, and distributed topology control mechanisms. This work is one of the first to consider opportunistically deploying both static and mobile nodes to enhance coverage and connectivity in a hybrid sensor network. The 3-D topology control technique developed is algorithmically simpler, has much lower complexity and a fast running time, and produces networks that utilize minimal transmit power to reduce interference, while guaranteeing connectivity using local geometric information.

During my stay at USC, I collaborated with a nonprofit organization called Iridescent that works on science and engineering education at schools in Los Angeles, Bay Area, and New York. Their philosophy is summarized in the following quote which is deeply consonant with my view as well: "If you want to build a ship, don't drum up the people to gather wood, divide the work and give orders. Instead, teach them to yearn for the vast and endless sea." - Antoine De Saint-Exupery.

As part of the "Engineers as Teachers" fellowship program, I volunteered to teach for two semesters at Garfield High School in Los Angeles. My students came from under-privileged backgrounds and had learning disabilities. I taught them a basic course on electromagnetism and wireless sensors. Following are some of the course materials I designed and used in class. Some more information on these outreach activities is available here.

When one line of enquiry reaches an end then another approach is needed, often from a completely new direction. This is where the lateral thinking comes in. Some people find it frustrating that for any puzzle it is possible to construct various answers which fit the initial statement of the puzzle. However, for a good lateral thinking puzzle, the proper answer will be the best in the sense of the most apt and satisfying. When you hear the right answer to a good puzzle of this type you should want to kick yourself for not working it out!

This kind of puzzle teaches you to check your assumptions about any situation. You need to be open-minded, flexible and creative in your questioning and able to put lots of different clues and pieces of information together. Once you reach a viable solution you keep going in order to refine it or replace it with a better solution. This is lateral thinking!

This list contains some of the most renowned and representative lateral thinking puzzles as well as some of those which crop up most frequently on the net. Lateral Puzzles Forum